Self-Study Note - Optimal and Learning-Based Control (AA 203)

The following are my self-study notes for the PhD preparatory course, Optimal and Learning-Based Control (AA 203). This content is intended solely for personal review and to motivate myself. Please email me at 12011126@mail.sustech.edu.cn to report any issue, and the original course metarials are at https://stanfordasl.github.io/aa203/sp2223/.

Content

- Lec 01 Introduction

- Lec 02 Nonlinear optimization theory

- Lec 03 Pontryagin’s maximum principle and indirect methods

Lecture 01 - Introduction

Click here to visit the online slides.

1.1 Dynamical System

For a continuous process, we have: Time \(t\in R\), State \(x(t)\in R^n\), Control Input \(u(t)\in R^m\). Dynamics \(\dot{x}(t) = f (t,x(t), u(t))\), and the Trajectories \(x:t\mapsto x(t), u:u\mapsto u(t)\), where \(f\) is sufficiently for each initial condition.

It can be written in matrix format, more generally, for a multi dimensions circumstance, it is

\[\left( \begin{matrix} \dot{s} \\ \dot{v} \end{matrix} \right) = \left[ \begin{matrix} 0 & I \\0 & 0 \\ \end{matrix} \right] \left( \begin{matrix} s \\ v \end{matrix} \right)+ \left[ \begin{matrix} 0 \\ I \end{matrix} \right] u\]The Proportional-derivative (PD) feedback is represented as:

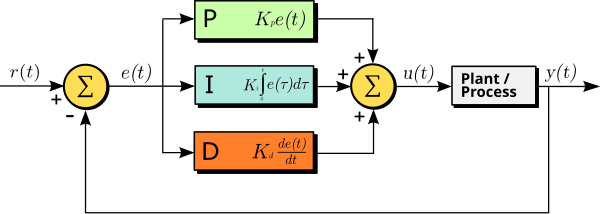

\[u = -k_ps-k_dv \Rightarrow \left( \begin{matrix} \dot{s} \\ \dot{v} \end{matrix} \right) = \left[ \begin{matrix} 0 & I \\ -k_p & -k_d \\ \end{matrix} \right] \left( \begin{matrix} s \\ v \end{matrix} \right)\]PID/PD: A common feedback-based control loop mechanism commonly used to manage machines and processes and process that require continuous control and automatic adjustment.

- The proportional (P) component responds to the current error value by producing an output that is directly proportional to the magnitude of the error.

- The integral (I) component, in turn, considers the cumulative sum of past errors to address any residual steady-state errors that persist over time, eliminating lingering discrepancies.

- The derivative (D) component predicts future error by assessing the rate of change of the error, which helps to mitigate overshoot and enhance system stability, particularly when the system undergoes rapid changes.

The PID controller reduces the likelihood of human error and improves automation.

1.2 Stability and Lyapunov Function

1.2.1 The mathematical definitions of Stability

For a projection \(\dot{x}=f(x)\), and an equilibrium point \(\bar{x}\in R^n\), the stability of a system means the following, where the parameters are compact values.

1.) Marginal/Lyapunov: Trajectories that start close to the equilibrium remain close to the equilibrium.

\[\forall\epsilon>0,\exists\delta>0: \|x(0) - \bar{x}\|<\delta \Rightarrow \|x(t)-\bar{x}\|<\epsilon,\forall t\ge0\]2.) Asymptotic (local): Trajectories that start near the equilibrium converge to it.

\[\exists\delta>0:\|x(0)-\bar{x}\|<\delta \Rightarrow \lim_{t\to\infty}\|x(t)-\bar{x}\|=0\]3.) Exponential (local): Trajectories that start near the equilibrium converge to it exponentially fast.

\[\exists\delta,c,\alpha>0:\|x(0)-\bar{x}\|<\delta\Rightarrow\|x(t)-\bar{x}\|\le ce^{-\alpha t}\|x(0)-\bar{x}\|\]Take the compact value \(\delta\to\infty\) to get the global definitions. For Linear Time-Invariant (LTI) system, “Asymptotic = Exponential” and “local = global” always.

1.2.2 Lyapunov Function

1.) Lipschitz continuity

For a function on real set \(f:D\in R\to R\), it can be represented as \(\|f(a)-f(b)\|\le K\|a-b\|, \forall a,b\in D\).Moreover, given two metric spaces \((X, d_X)\) and \((Y, d_Y)\), where \(d_X\) denotes the metric on the set \(X\) and \(d_Y\) is the metric on set \(Y\), a function \(f:X\to Y\) is called Lipschitz continuous if there exists a rea; constant \(K\ge 0\) such that, for all \(x_1, x_2 \in X\)

\[d_Y(f(x_1),f(x_2))\le K d_X(x_1,x_2)\]Any such \(K\) is referred to as a Lipschitz constant for the function \(f\) and \(f\) may also be referred to as K-Lipschitz. The smallest constant is sometimes called the (best) Lipschitz constant of \(f\) for the dilation or dilatation of \(f\).

2.) Lyapunov function & directly method

The function which equilibrium point at \(x=0\), used to describe a time-invariant/autonomous system.

Consider a continuous scalar function \(V(x)\) that is 0 at the origin and positive (\(V(x)\ge 0, V(x)=0\Leftrightarrow x=0\)) elsewhere in some ball enclosing the origin. What’s more, the\(\dot{V}\) is negative definite, like \(\nabla V(x)^Tf(x)\le 0, \nabla V(x)^Tf(x)=0 \Leftrightarrow x=0\).

Then \(\bar{x}=0\) is the locally asymptotically stable, \(V(0)\) is the equilibrium point. In addition, if \(V\) is radially unbounded and \(V(x)\to\infty\) as \(\|x\|\to\infty\), then \(\bar{x}=0\) is globally asymptotically stable as well.

3.) Converse Lyapunov theorem

It is the converse statement of Lyapunov directly method. If \(\bar{x}=0\) is the locally asymptotically stable equilibrium, with the region of attraction \(\mathcal{A}\subset R^n\), then for \(V\in \mathcal{C}^1(R^n,R)\) such that:

- The \(V\) is positive definite on \(\mathcal{A}\)

- The \(\dot{V}\) is negative-definite on \(\mathcal{A}\)

- The \(V(x)\to \infty\) as \(x\to\partial\mathcal{A}\)

- The \(\{x\|V(x)\le c\}\) is a compact subset of \(\mathcal{A}\) for any \(c>0\).

- If \(\bar{x}=0\) is globally equilibrium, then \(V\) is radially unbounded.

1.3 Optimal Control Problem

Basically, it is a optimal process, with objective functions subject to convex or concave constraints. In control field, it can be represented as the follow.

For a continuous-time process, the objective/cost function (terminal+stage) is

\[\min_{x,u} J(x,u) := \ell_T(T,x(T)) + \int_{0}^{T} \ell(t,x(t),u(t)) dt\]which subject to the following constraints:

- dynamical feasibility: \(\dot{x}=f(t,x(t),u(t)),\forall t\in[0,T]\)

- boundary conditions: \(x(t_0)=x_0, x(T)\in\mathcal{X}_T\)

- state constraints: \(x(t)\in\mathcal{X}, \forall t\in[0,T]\)

- input constraints: \(u(t)\in\mathcal{U}, \forall t\in[0,T]\)

There’s one difference,

- Open-loop input: \(u^*(t)\) for specific initial state \(x_0\)

- Closed-loop input: \(u^*(t)=\pi^*(t,x(t))\)

Lecture 02 - Nonlinear optimization theory

Click here to visit the online slides. Click here to visit another online notes.

2.1 Unconstrained Optimization

- First order necessary optimality condition: let \(x^*\) to be a local minimum, then \(\nabla f(x^*)=0\)

- Second order necessary optimality condition (NOC): let \(x^*\) to be a local minimum, then \(\nabla^2 f(x^*)\succeq 0\), which is positive semi-definite.

- Second order sufficient optimality condition (SOC): let \(x^*\) to be a local minimum, then \(\nabla f(x^*)=0\) and \(\nabla^2 f(x^*)\succ 0\), which is positive definite.

- The Hessian matrix is positive definite, which means that the function is convex.

- For an uncontrained optimization problem, if \(\nabla f(x^*)=0\) and \(\nabla^2 f(x^*)\succ 0\), then

\(f(x^*+\Delta x) - f(x^*) \approx \frac{1}{2}\Delta x^T\nabla^2 f(x^*)\Delta x > 0\)

- Convexity: A function is convex if its Hessian is positive semi-definite, and strictly convex if its Hessian is positive definite.

- Quaratric \(f(x) = x^TQx\), where \(Q \succeq 0\), is convex.

- Affine \(f(x) = Ax+b\), both convex and concave.

2.2 Descent Methods for Unconstrained Optimization

Start at an initial guess \(x_0\), and iteratively update the guess \(x_k\) to \(x_{k+1}\), until the convergence criteria is met, i.e. \(f(x^{(k+1)})-f(x^{(k)})\le\epsilon, \forall k\in\{0,1,2,\dots\}\)

2.2.1 Consider to update rule:

\(x^{(k+1)} = x^{(k)} + \alpha^{(k)}d^{(k)}\) where \(\alpha^{(k)}\) is the step size, and \(d^{(k)}\) is the descent direction. Then \(f(x^{(k+1)}) \approx f(x^{(k)}) + \alpha^{(k)} \nabla f(x^{(k)})^Td^{(k)}\) The goal is to choose \(\alpha^{(k)}\) and \(d^{(k)} \in R\) such that this approximation is appropriate and \(\nabla f(x^{(k)})^Td^{(k)}<0\)

2.2.2 Gradient Descent directions:

- Constant: choose \(\alpha^{(k)}\equiv \alpha >0\). convergence can be slow, or the iterates could diverge if \(\alpha\) is too large.

- Diminishing: Ensure \(\alpha^{(k)}\to 0\), and \(\sum_{k=0}^{\infty} \alpha^{(k)}=\infty\). This does not guarantee descent at each iteration, but avoid diverging iterates.

- Line search: Given the current iterate \(x^{(k)}\) and descent direction \(d^{(k)}\), compute \(a^{(k)} = \arg\min_{\alpha>0}f(x^{(k)}+\alpha d^{(k)})\) excatly if possible. Otherwise, do backtraching line search, like:

- initialize \(\alpha^{(k)}=1\)

- while \(f(x^{(k)}+\alpha d^{(k)})> f(x^{(k)})+\gamma\alpha^{(k)}\nabla f(x^{(k)})^Td^{(k)}\)

- do \(\alpha^{(k)} \gets \beta\alpha^{(k)}\), where \(\gamma\in\{0,0.5\}, \beta\in(0,1)\) are hyper-parameters.

2.3 Equality-constrained optimization

Define the Lagrangian function:

\[L(x,\lambda) :=f(x)+\lambda^Th(x)=f(x)+\sum_{i=1}^m \lambda_ih_i(x)\]Then for a local minimum (convex fuction), assume the constraints are linearly independent, there exists a unique \(\lambda^*\) such that

\[\nabla_xL(x^*,\lambda^*) = \nabla f(x^*)+\sum_{i=1}^m\lambda_i^*\nabla h_i(x^*)=0\]2.4 Inequality-constrained optimization

The goal is to \(\min f(x)\), subject to \(h(x)=0, g(x)\prec 0\). For any feasible point \(x\), i.e., such that \(h(x)<0, g(x)\preceq 0\), define the set of active Inequality constraints (linearly independent) by

\[\mathcal{A}_g(x):=\{j\in\{1,2,\dots,r\}\|g_j(x)=0\}\] \[L(x,\lambda,\mu):=f(x)+\lambda^Th(x)+\mu^Tg(x)=f(x)+\sum_i^m\lambda_ih_i(x)+\sum_j^r\mu_jg_j(x)\]Via the first order NOC, we have:

\[\nabla_xL(x^*,\lambda^*,\mu^*)=0, \mu^*\succeq 0, \mu_j^*=0, \forall j\notin\mathcal{A}_g(x^*)\]Also could be written as \(\mu_j^*g_j(x^*)=0, \forall j\in\{1,2,\dots,r\}\)

KKT conditions: For a local minimum, there exists a unique \(\lambda^*,\mu^*\) such that

\[\nabla_xL(x^*,\lambda^*,\mu^*)=0, \mu^*\succeq 0, g(x^*)\preceq 0, \mu^{*,T}g(x^*)=0\]Lecture 03 - Pontryagin’s maximum principle & indirect methods

Click here to visit the online slides.

3.1 Fitz John first-order NOCs

For a optimizing process with equality and inequality constraints, there exist the local minimum such that:

\[(\eta,\lambda^*,\mu^*)\neq0\] \[-\nabla_x L_\eta(x^*,\lambda^*,\mu^*)\perp_{x^*} \mathcal{S}\] \[\mu_j^*\ge0,\mu_j^*g_j(x^*)=0, \forall j\in\{1,2,\dots,r\}\]We call \(L_\eta(x,\lambda,\mu)\) is the partial Lagrangian, like:

\[L_\eta(x,\lambda,\mu):=\eta f(x)+\lambda^Th(x)+\mu^Tg(x)\]Corollary: If \(\mathcal{S}=R^n\) and the LICQ(KKT) holds, then \(\eta=1\) and \(\nabla_xL_1(x^*,\lambda^*,\mu^*)=0\)

3.2 Weak Pontryagin max principle

Tips:

- Most systems that are used to position or to move an object are aiming to reach a desired position with an acceptable precision (position certainty).

- In general, open-loop control in motion systems means that there is no position feedback of a moving object.

- Closed-loop control means that there is some kind of position information that is fed back to the motion controller of a system and that is used in the positioning process.

3.2.1 Discrete-time circumstance

Consider the discrete=time optimal control problem, which means that the constraints are set of status.

An optimal control \(u^*=\{u_t^*\}^{T-1}_{t=0}\) for a specific initial state \(\bar{x}_0\) , is an open-loop input. And for the form \(u^*_t=\pi^*(t,x_t)\) is a closed-loop input.

3.2.2 Continuous-time circumstance

Consider the continuous-time optimal control problem, which means that the constraints are set of time.